In everyday use, data acquired from a mobile platform for surveillance and other police or military operations can be highly valuable. However, raw video data has its limits. Without some enhancement, it is largely restricted to real-time target acquisition. For offline analysis, video capture alone provides only a limited set of data points, and without video georeferencing, the geographic context of the footage is lost.

To keep data collection from becoming a waste of time, the key is to add metadata that provides higher-quality data points and that all-important context. When presented in a more user-friendly way, that metadata can yield mission-critical information that can be exploited in real time during an operation.

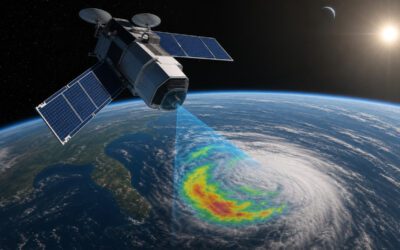

Integrated metadata collection and display need to be STANAG 4609-compliant and provide full motion video georeferencing that positions the platform within the landscape. This gives the data context and provides clearly identifiable geographic points of reference.
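As a rough illustration of what georeferencing involves, the sketch below estimates where the sensor's line of sight meets the ground, given the platform's position, its height above ground and the sensor's pointing angles. It assumes flat terrain, a spherical Earth and a small-distance equirectangular approximation; real STANAG 4609 georeferencing uses a fuller sensor model and terrain data. The function and parameter names are hypothetical.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius (assumption: spherical Earth)

def ground_point(lat_deg, lon_deg, height_agl_m, azimuth_deg, depression_deg):
    """Estimate the lat/lon where the sensor line of sight hits flat ground.

    Hypothetical helper: assumes flat terrain below the platform and a
    small-distance equirectangular approximation.
    azimuth_deg: sensor pointing direction, clockwise from true north.
    depression_deg: angle below the horizontal, in (0, 90].
    Returns (lat_deg, lon_deg, ground_range_m).
    """
    if not 0 < depression_deg <= 90:
        raise ValueError("depression angle must be in (0, 90] degrees")
    # Horizontal distance from the point directly beneath the platform.
    ground_range = height_agl_m / math.tan(math.radians(depression_deg))
    az = math.radians(azimuth_deg)
    north_m = ground_range * math.cos(az)
    east_m = ground_range * math.sin(az)
    # Convert metre offsets to degree offsets (equirectangular approximation).
    dlat = math.degrees(north_m / EARTH_RADIUS_M)
    dlon = math.degrees(east_m / (EARTH_RADIUS_M * math.cos(math.radians(lat_deg))))
    return lat_deg + dlat, lon_deg + dlon, ground_range
```

For example, a platform 1,000 m above flat ground, looking due north at a 45-degree depression angle, is looking at a point roughly 1 km north of its own ground position.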

Why choose a standard-share system?

The benefits of a standard-share system that integrates with existing sensor options through a common standard, such as STANAG 4609, are clear. Rather than retrofitting an entire compliant system, individual ‘bolt-on’ software modules can be added as and when required. The type and volume of metadata collected depend entirely on the operator’s requirements. As those requirements change or develop, new data collection systems can be added to augment the sensor data already collected by the existing STANAG set-up.

This approach is quicker and easier, and it is also more cost-effective: metadata collection can be expanded without a major impact on operating budgets.

Real-time analysis

The crucial requirement for police operators is that data can be analysed quickly. When STANAG 4609 data is carried in an MPEG-2 Transport Stream, the stream multiplexes several elementary streams containing encoded video, audio and encapsulated metadata. The critical element is that this data can be sifted quickly, bringing the relevant information to the fore without the operator having to wade through hours of irrelevant footage.
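To show how the elementary streams in a transport stream are kept apart, the sketch below groups 188-byte MPEG-2 TS packets by their 13-bit packet identifier (PID). It is a minimal illustration, assuming the input starts on a packet boundary; it does not parse the PAT/PMT tables that announce which PID carries video, audio or metadata, and it does not reassemble PES packets. The function name is hypothetical.

```python
from collections import defaultdict

TS_PACKET_SIZE = 188  # fixed MPEG-2 transport stream packet length
SYNC_BYTE = 0x47      # every TS packet starts with this byte

def split_by_pid(ts_bytes):
    """Group MPEG-2 TS packet payloads by PID; returns {pid: bytes}.

    Sketch only: assumes packet-aligned input, skips packets without a
    sync byte, and does not parse PAT/PMT or reassemble PES packets.
    """
    streams = defaultdict(bytearray)
    for offset in range(0, len(ts_bytes) - TS_PACKET_SIZE + 1, TS_PACKET_SIZE):
        pkt = ts_bytes[offset:offset + TS_PACKET_SIZE]
        if pkt[0] != SYNC_BYTE:
            continue  # lost sync; a real demuxer would resynchronise
        pid = ((pkt[1] & 0x1F) << 8) | pkt[2]  # 13-bit packet identifier
        adaptation_control = (pkt[3] >> 4) & 0x03
        if adaptation_control in (0, 2):
            continue  # reserved, or adaptation field only: no payload
        start = 4  # payload follows the 4-byte header...
        if adaptation_control == 3:
            start += 1 + pkt[4]  # ...unless an adaptation field sits in between
        streams[pid].extend(pkt[start:])
    return {pid: bytes(data) for pid, data in streams.items()}
```

Once the payloads are separated, only the metadata PID needs to be processed for search and analysis, which is what makes fast sifting possible without decoding the video.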

The metadata can be filtered and ‘boiled down’ by software algorithms so that relevant information surfaces quickly. This, in turn, can be fed into real-time operations, delivering a more user-friendly system of metadata distribution that all relevant users can access.
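Before metadata can be boiled down, it has to be decoded. In STANAG 4609 video the metadata is commonly KLV-encoded (key, length, value), for example as a MISB ST 0601 Local Set. The sketch below walks a local set, keeps only the tags of interest and decodes sensor latitude and longitude. The tag numbers and scaling follow MISB ST 0601 as we understand it and should be checked against the standard; the helper names are hypothetical, and the sketch assumes single-byte tags and that the 16-byte Universal Key and outer length have already been stripped.

```python
import struct

def read_ber_length(buf, pos):
    """Decode a BER length starting at buf[pos]; return (length, new_pos)."""
    first = buf[pos]
    pos += 1
    if first < 0x80:
        return first, pos  # short form: the byte itself is the length
    n = first & 0x7F  # long form: low 7 bits give the number of length bytes
    return int.from_bytes(buf[pos:pos + n], "big"), pos + n

def parse_local_set(body, wanted_tags):
    """Return {tag: raw value bytes} for the wanted tags only.

    Assumes single-byte tags (tag < 128) and a body with the Universal
    Key and outer length already removed.
    """
    items = {}
    pos = 0
    while pos < len(body):
        tag = body[pos]
        length, pos = read_ber_length(body, pos + 1)
        if tag in wanted_tags:
            items[tag] = body[pos:pos + length]
        pos += length  # skip values we are not interested in
    return items

# Assumed MISB ST 0601 tags and scaling (verify against the standard).
SENSOR_LATITUDE, SENSOR_LONGITUDE = 13, 14
INT32_MAX = 2**31 - 1

def decode_position(items):
    """Map signed 32-bit raw values to degrees (+/-90 lat, +/-180 lon)."""
    lat_raw = struct.unpack(">i", items[SENSOR_LATITUDE])[0]
    lon_raw = struct.unpack(">i", items[SENSOR_LONGITUDE])[0]
    return lat_raw * 90.0 / INT32_MAX, lon_raw * 180.0 / INT32_MAX
```

Filtering at the tag level means everything the operator does not need is skipped without being decoded.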

Software development

Metadata can include anything from geospatial positioning to a target vehicle’s direction of travel. For onboard missions, the key component is geospatial awareness. With FlySight’s OPENSIGHT Software Development Kit, everything from mission planning and execution to analysis and debriefing can be integrated and managed easily.
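As an example of the kind of derived metadata mentioned above, a target vehicle’s direction of travel can be estimated from two successive position fixes using the standard initial great-circle bearing formula. This is a generic sketch, not OPENSIGHT’s API; it assumes the target positions are already available as latitude/longitude pairs (for instance from georeferenced video), and the function names are hypothetical.

```python
import math

def initial_bearing(lat1, lon1, lat2, lon2):
    """Initial great-circle bearing from fix 1 to fix 2.

    Returns degrees clockwise from true north, in [0, 360).
    """
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    x = math.sin(dlon) * math.cos(phi2)
    y = math.cos(phi1) * math.sin(phi2) - math.sin(phi1) * math.cos(phi2) * math.cos(dlon)
    return (math.degrees(math.atan2(x, y)) + 360.0) % 360.0

def compass_point(bearing):
    """Round a bearing to the nearest of eight compass points for display."""
    points = ["N", "NE", "E", "SE", "S", "SW", "W", "NW"]
    return points[int((bearing + 22.5) // 45) % 8]
```

Reducing a bearing to a compass point is one way of presenting the result in the more user-friendly form the article argues for.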

The flexibility of the software also allows operators to revise and develop Decision Support Systems for real-time threats. In a challenging and ever-changing world, this is crucial in allowing police and military operators to respond to rapidly developing situations. OPENSIGHT also improves the dissemination of situational awareness by creating a Common Operating Picture.

The OPENSIGHT Software Development Kit features built-in interoperability with STANAG and MIL-STD standards, and the software can interface with current NATO systems. It supports integrating new systems from the ground up as well as incorporating existing legacy Command and Control systems into a cohesive, fully integrated operating system.

The advantages are clear: by providing operators with easily interpreted metadata, a far more focused analysis of operations can be achieved in much less time. This allows commanding officers to base decisions on a larger data set that is both accurate and up to date.

Multi-layering of heterogeneous sensors covers everything from traditional infrared, radar and SONAR through to electro-optical and LIDAR data, giving analysts a broader and far more detailed spectrum of relevant data to work with.

The system can also build up layers of information from multiple sources and add augmented reality data points, such as location addresses and nodal data on specific targets. This gives operators the best possible interpretation of a real-time situation without the distraction of background clutter.
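The article does not say how layers from different sensors are combined. One simple approach, shown here purely as an illustrative assumption, is nearest-timestamp alignment: for each video frame, pick the record from each sensor layer that is closest in time, discarding any that are too far away. The `Layer` structure, the helper names and the 0.5-second default tolerance are all hypothetical.

```python
import bisect
from dataclasses import dataclass

@dataclass
class Layer:
    """One sensor's time-ordered records (hypothetical structure)."""
    name: str
    times: list   # ascending timestamps, in seconds
    values: list  # record payloads, same length as times

def nearest_record(layer, t, max_gap):
    """Return the record closest in time to t, or None if none is within max_gap seconds."""
    i = bisect.bisect_left(layer.times, t)
    best = None
    for j in (i - 1, i):  # the closest record is just before or just after t
        if 0 <= j < len(layer.times):
            gap = abs(layer.times[j] - t)
            if gap <= max_gap and (best is None or gap < best[0]):
                best = (gap, layer.values[j])
    return None if best is None else best[1]

def build_overlay(layers, frame_time, max_gap=0.5):
    """Collect one time-aligned record per layer for a single video frame."""
    overlay = {}
    for layer in layers:
        record = nearest_record(layer, frame_time, max_gap)
        if record is not None:
            overlay[layer.name] = record
    return overlay
```

Dropping records outside the tolerance is a crude form of clutter control: a layer with no recent data simply contributes nothing to that frame rather than showing stale information.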